Publications

publications in reversed chronological order.

2026

-

Solaris: Building a Multiplayer Video World Model in MinecraftGeorgy Savva, Oscar Michel, Daohan Lu, and 6 more authorsUnder Review, 2026

Solaris: Building a Multiplayer Video World Model in MinecraftGeorgy Savva, Oscar Michel, Daohan Lu, and 6 more authorsUnder Review, 2026Existing action-conditioned video generation models (video world models) are limited to single-agent perspectives, failing to capture the multi-agent interactions of real-world environments. We introduce Solaris, a multiplayer video world model that simulates consistent multi-view observations. To enable this, we develop a multiplayer data system designed for robust, continuous, and automated data collection on video games such as Minecraft. Unlike prior platforms built for single-player settings, our system supports coordinated multi-agent interaction and synchronized videos + actions capture. Using this system, we collect 12.64 million multiplayer frames and propose an evaluation framework for multiplayer movement, memory, grounding, building, and view consistency. We train Solaris using a staged pipeline that progressively transitions from single-player to multiplayer modeling, combining bidirectional, causal, and Self Forcing training. In the final stage, we introduce Checkpointed Self Forcing, a memory-efficient Self Forcing variant that enables a longer-horizon teacher. Results show our architecture and training design outperform existing baselines. Through open-sourcing our system and models, we hope to lay the groundwork for a new generation of multi-agent world models.

2024

-

HuDOR: Bridging the Human to Robot Dexterity Gap through Object-Oriented RewardsIrmak Guzey, Yinlong Dai, Georgy Savva, and 2 more authorsICRA 2025, 2024

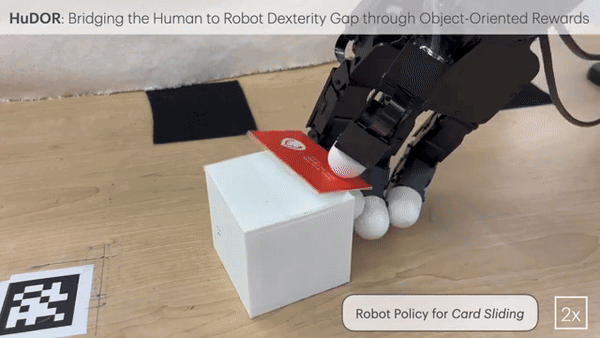

HuDOR: Bridging the Human to Robot Dexterity Gap through Object-Oriented RewardsIrmak Guzey, Yinlong Dai, Georgy Savva, and 2 more authorsICRA 2025, 2024Training robots directly from human videos is an emerging area in robotics and computer vision. While there has been notable progress with two-fingered grippers, learning autonomous tasks without teleoperation remains a difficult problem for multi-fingered robot hands. A key reason for this difficulty is that a policy trained on human hands may not directly transfer to a robot hand with a different morphology. In this work, we present HUDOR, a technique that enables online fine-tuning of the policy by constructing a reward function from the human video. Importantly, this reward function is built using object-oriented rewards derived from off-the-shelf point trackers, which allows for meaningful learning signals even when the robot hand is in the visual observation, while the human hand is used to construct the reward. Given a single video of human solving a task, such as gently opening a music box, HUDOR allows our four- fingered Allegro hand to learn this task with just an hour of online interaction. Our experiments across four tasks, show that HUDOR outperforms alternatives with an average of 4× improvement. Code and videos are available on our website https://object-rewards.github.io/.